Generative AI Cybersecurity: Where It Helps and Where It Can Hurt

Generative AI is already part of real cybersecurity work. On the good side, security teams use generative AI tools to summarize alerts, sort security data, speed up incident response, and take over some routine tasks. On the bad side, the same technology helps malicious actors write better lures, test malicious code, and scale phishing attacks faster.

The UK’s NCSC says AI will almost certainly make parts of cyber intrusion more effective and efficient, while Canada’s Cyber Centre says GenAI can also help defenders scan large datasets, identify potential threats, and reduce false positives.

So the real question is not whether generative AI belongs in security. It already does. The real question is how it is being deployed: where it genuinely helps and where it quietly creates new security threats. We will look at both sides, and then show where a VPN such as VeePN fits into the picture.

Generative AI cybersecurity: what it actually means in practice

When people hear generative AI, they usually picture chatbots. But the field is broader than that. It includes large language models, other AI models, and older approaches such as generative adversarial networks, which NIST defines as a framework that learns to generate new data with the same statistics as the training set. In security work, that means systems that can generate text, code, summaries, or synthetic data, not just answer questions in a chat box.

In day-to-day work, generative AI for cybersecurity is best understood as an assistant layer on top of existing tools. It can help with threat detection, first-pass triage, report writing, search, and pattern spotting across huge volumes of logs and alerts. It is useful because modern security operations involve analyzing vast amounts of data, and people simply do not have infinite time.

Here is where it is already useful:

- Threat detection and triage. GenAI can help cybersecurity teams scan logs, group alerts, and identify potential threats faster than a person reading everything by hand. Canada’s Cyber Centre specifically notes that AI can help practitioners scan large datasets and minimize false positives, which is a real gain in busy security operations.

- Threat intelligence summarization. This is one of the easiest wins. A model can read long feeds, pull out key points, and draft a plain-English summary for security professionals who need quick context. OpenAI says it is using AI as a force multiplier for its investigative teams while also disrupting malicious uses tied to social engineering, cyber espionage, scams, and other malicious cyber activity.

- Software development and vulnerability assessment. Security teams are also using GenAI to review code, explain flaws, and suggest fixes. CISA’s own AI use-case material includes penetration-testing software that leverages generative AI to provide remediation guidance, which shows how implementing generative AI can support both developers and defenders.

- Model training with safer inputs. Some organizations use synthetic data when training AI models so they do not need to expose real personally identifiable information or other confidential information in every workflow. That can be useful, but only if the underlying data collection and testing practices are sound.
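The triage idea in the list above often starts with something much simpler than a model: collapsing repeated alerts into groups, so that one summary covers dozens of events. The sketch below is a minimal, non-AI illustration of that first step under stated assumptions. The `Alert` fields, the 1 to 5 severity scale, and the `group_alerts` helper are hypothetical and not taken from any particular SIEM.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    # Hypothetical alert shape; real SIEM exports use their own field names.
    rule: str       # detection rule that fired
    host: str       # affected asset
    severity: int   # assumed scale: 1 (low) .. 5 (critical)
    ts: int         # Unix timestamp in seconds


def group_alerts(alerts):
    """Collapse alerts that share (rule, host) into one triage group."""
    groups = defaultdict(list)
    for a in alerts:
        groups[(a.rule, a.host)].append(a)

    summary = []
    for (rule, host), items in groups.items():
        summary.append({
            "rule": rule,
            "host": host,
            "count": len(items),
            "max_severity": max(a.severity for a in items),
            "first_seen": min(a.ts for a in items),
            "last_seen": max(a.ts for a in items),
        })
    # Highest severity first, then the noisiest groups.
    summary.sort(key=lambda g: (-g["max_severity"], -g["count"]))
    return summary


if __name__ == "__main__":
    alerts = [
        Alert("failed_login", "web-01", 2, 100),
        Alert("failed_login", "web-01", 2, 130),
        Alert("failed_login", "web-01", 3, 160),
        Alert("new_admin_user", "dc-01", 5, 200),
    ]
    for group in group_alerts(alerts):
        print(group)
```

In a setup like this, the grouped summary, not the raw event stream, is what you might hand to a model for a plain-English first pass, and an analyst still decides what happens next.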

That is the productive side of the story. Threat detection is where the gains are largest, so it is worth a closer look before we get to where the trouble starts.

Generative AI in cybersecurity: enhancing threat detection

This is where AI helps security teams the most. It handles the first round of review by sorting alerts, summarizing issues, and flagging suspicious patterns. That saves analysts hours and lets them focus on what matters.

But we should keep this grounded. AI is not meant to replace experienced analysts. It helps them get through repetitive work in less time, so they can focus on tougher decisions, unusual activity, and more serious investigations.

A few strong use cases stand out:

- Enhancing threat detection with faster pattern matching. AI can help spot unusual behavior, support anomaly detection, and strengthen advanced threat detection when the signal is buried inside too much data. That matters because many security systems are noisy by default.

- Faster incident response. A model can draft an initial timeline, summarize affected assets, and suggest containment steps. It should not be trusted blindly, but it can help teams move faster in the first minutes of an incident.

- More room for human work. When AI handles routine tasks, human cybersecurity experts can focus on threat hunting, complex cases, and decisions that need context, skepticism, and experience. This is where human capabilities still matter most.
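The anomaly detection idea from the list above can be illustrated with a plain statistical baseline. To be clear, this is not generative AI: it is a robust (median-based) z-score, a common way to flag values that sit far from normal. The input format (hourly event counts), the `robust_outliers` name, and the 3.5 cutoff are illustrative assumptions; 3.5 is a commonly used threshold for this score, and real tools use much richer baselines.

```python
from statistics import median


def robust_outliers(counts, threshold=3.5):
    """Return indices whose modified z-score exceeds `threshold`.

    Uses the median and the median absolute deviation (MAD), so a single
    large spike does not inflate the baseline it is compared against.
    """
    med = median(counts)
    mad = median(abs(c - med) for c in counts)
    if mad == 0:
        # Flat baseline: flag anything that differs from the median at all.
        return [i for i, c in enumerate(counts) if c != med]
    # 0.6745 scales MAD so the score is comparable to a standard z-score.
    return [
        i for i, c in enumerate(counts)
        if 0.6745 * abs(c - med) / mad > threshold
    ]


if __name__ == "__main__":
    # Hypothetical hourly login counts with one burst at index 7.
    hourly_logins = [40, 42, 38, 41, 39, 43, 40, 310, 41, 39]
    print(robust_outliers(hourly_logins))  # → [7]
```

The median-based version is used here instead of a mean-based z-score because, on short windows, a single spike pulls the mean and standard deviation toward itself and can hide the very outlier you are looking for.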

So yes, generative AI in cybersecurity can be a powerful tool. But that only holds up if the system is trained well, tested well, and watched closely.

Generative AI cybersecurity risks that deserve real attention

This is the part companies often rush past. The same artificial intelligence that helps defenders can also open new attack vectors and make old ones worse. The risk is not just one dramatic breach. It is a mix of weak controls, rushed adoption, bad model training, and too much trust in machine output.

The biggest generative AI cybersecurity risks usually look like this:

- Sensitive data leakage. Employees paste internal notes, code, customer records, or other personally identifiable information into public tools, often without realizing where that data ends up. That can create privacy, IP, and compliance problems.

- Prompt injection and insecure output handling. OWASP lists prompt injection, insecure output handling, and sensitive information disclosure among the top risks for LLM applications. In plain English, a model can be manipulated into revealing data, following bad instructions, or passing unsafe output into other systems.

- Poisoned training data. NIST warns that attackers can tamper with training inputs or datasets so AI systems misclassify threats or behave in unintended ways. That can damage trust, create blind spots, and weaken your security posture at exactly the wrong moment.

- Better phishing and deeper social engineering. This is already showing up in the wild. Google says threat actors are using AI for reconnaissance, social engineering, and malware development, while Microsoft warns that AI-enhanced social engineering is making campaigns more persuasive and scalable. That means phishing attacks and other forms of manipulation are getting cleaner, faster, and harder to spot.

- Faster malware iteration. Google’s reporting shows attackers using AI for malware-related work, and Microsoft warns that AI could support more autonomous malware behavior in the future. That does not mean one-click fully automated cyber attacks, but it does mean threat actors can test, rewrite, and try to evade detection faster than before.

- Too much trust in bad outputs. Weakly tuned machine learning models can create false positives, miss real issues, or produce advice that sounds confident but is simply wrong. In security, that can waste analyst time, hide real cybersecurity threats, or lead to messy security breaches driven by bad judgment and human error.
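One practical guard against insecure output handling is to treat model output as untrusted input: parse it strictly, and only act on values from a fixed allowlist. The sketch below assumes the model was asked to reply with JSON such as `{"action": ..., "host": ...}`. The action names, the host naming pattern, the approval set, and `parse_model_suggestion` itself are hypothetical examples, not part of any specific product.

```python
import json
import re

# Hypothetical allowlist of actions a model is permitted to suggest.
ALLOWED_ACTIONS = {"open_ticket", "add_watchlist", "isolate_host"}
# High-impact actions that must never run without a human sign-off.
NEEDS_APPROVAL = {"isolate_host"}
# Assumed internal host naming convention, e.g. "web-01".
HOST_PATTERN = re.compile(r"[a-z]+-[0-9]{2}")


def parse_model_suggestion(raw: str) -> dict:
    """Validate a model's JSON suggestion; raise ValueError if unsafe."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")

    action = data.get("action")
    host = data.get("host")
    # Check the type first so unhashable values cannot crash the set lookup.
    if not isinstance(action, str) or action not in ALLOWED_ACTIONS:
        raise ValueError(f"action not allowed: {action!r}")
    if not isinstance(host, str) or not HOST_PATTERN.fullmatch(host):
        raise ValueError(f"invalid host: {host!r}")

    return {
        "action": action,
        "host": host,
        "requires_approval": action in NEEDS_APPROVAL,
    }


if __name__ == "__main__":
    print(parse_model_suggestion('{"action": "isolate_host", "host": "web-01"}'))
```

This does not stop prompt injection itself. It limits what an injected instruction can make downstream systems do, and it keeps high-impact actions such as isolating a host behind human approval.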

Recent published examples make this more concrete. In November 2025, Google reported that threat actors were already using Gemini for research, troubleshooting, scripting, and content generation rather than some magical push-button attack flow. By February 2026, Google said those actors were increasingly integrating AI into reconnaissance, social engineering, and malware development.

So yes, gen AI can help defense. But it can also help attackers sharpen sophisticated cyberattacks if organizations skip the boring controls.

Artificial intelligence in cybersecurity still needs human oversight

This is the least flashy part of the story, but it matters most. The safest AI cybersecurity setup is not “replace the analysts.” It is “use AI for speed, keep people for judgment.” NCSC says security must be a core requirement across the AI lifecycle, and that keeping AI systems secure is as much about process, culture, accountability, and leadership as it is about technical controls.

A practical approach looks like this:

- Keep human oversight on high-impact calls. Let AI draft, rank, and suggest. Let people approve blocking, containment, takedown actions, or sensitive communications.

- Put clear rules around AI tools. Staff should know what can and cannot be pasted into public systems, especially when sensitive data, customer data, or internal code is involved. This is one of the simplest security measures, and one of the most ignored.

- Test the model like any other system. If you do not test for manipulation, leakage, and weird edge cases, you are just guessing.

- Train the team, not just the model. A weak team creates human error around prompts, trust, and access. A strong team knows when AI is being useful and when it is bluffing. That makes a real difference to your overall security posture.
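Clear rules about what can be pasted into public tools can be backed by a simple technical control: scrub obvious sensitive values before text leaves the organization. The regex-based sketch below is an illustrative assumption, not a complete data loss prevention tool. It catches only a few easy cases (email addresses, IPv4 addresses, and strings shaped like AWS access key IDs), and the `redact` helper and placeholder labels are hypothetical.

```python
import re

# Illustrative patterns only; real DLP tooling covers far more cases.
REDACTION_RULES = [
    ("EMAIL", re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")),
    ("AWS_KEY", re.compile(r"\bAKIA[0-9A-Z]{16}\b")),
    ("IPV4", re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")),
]


def redact(text: str) -> tuple[str, dict]:
    """Replace matches with placeholders and count what was removed."""
    counts = {}
    for label, pattern in REDACTION_RULES:
        text, n = pattern.subn(f"[{label}]", text)
        if n:
            counts[label] = n
    return text, counts


if __name__ == "__main__":
    prompt = ("Summarize: user jane.doe@example.com logged in from "
              "203.0.113.7 using key AKIAABCDEFGHIJKLMNOP")
    clean, found = redact(prompt)
    print(clean)
    print(found)
```

A filter like this is a safety net, not a substitute for the rules themselves: it will miss names, internal project details, and anything that does not match a pattern, which is why training the team still matters.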

That is the real lesson here. Cyber defense gets stronger when AI supports people, not when people stop thinking.

Why VeePN still matters in a world of cybersecurity AI

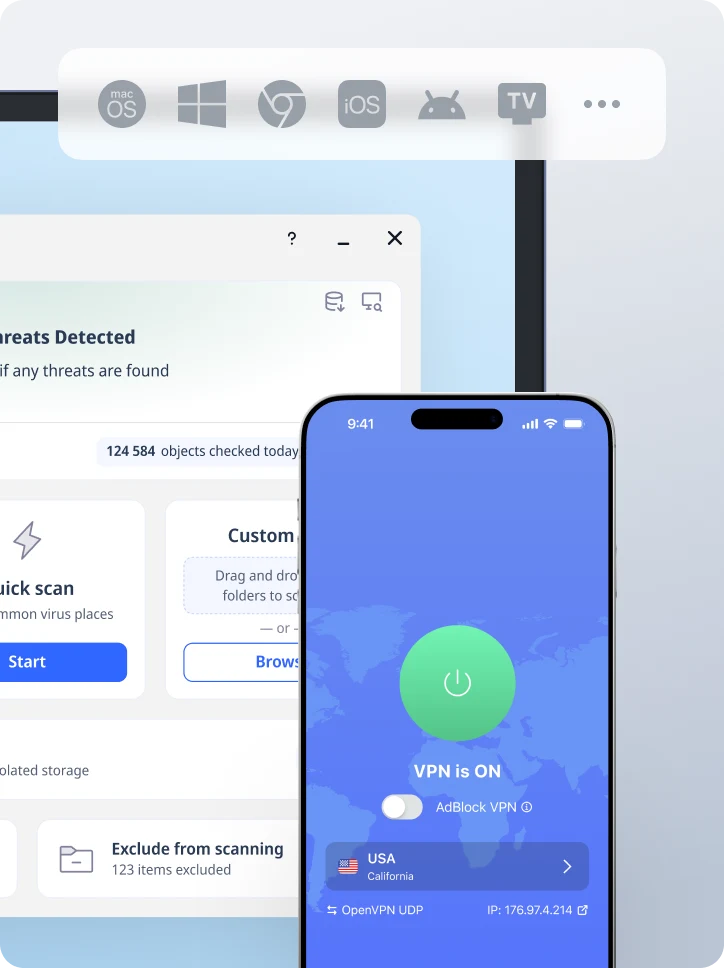

Even with strong AI-driven security tools, some risks remain very practical. AI can help with triage and threat intelligence, but it cannot protect your traffic on public Wi-Fi, hide your IP address, prevent DNS leaks, or alert you when your credentials appear in a breach. That is exactly where VeePN is still useful.

- AES-256 encryption. VeePN says its service uses AES-256 encryption to protect your traffic. That matters when you are working on public networks and do not want exposed traffic turning into an easy win for attackers.

- IP address changing. VeePN routes traffic through 2,600+ servers in 85 countries, which helps mask your real IP and adds a privacy layer around your daily browsing and remote work.

- Kill Switch. VeePN includes an automatic Kill Switch, so if the VPN drops, your traffic does not quietly spill onto an open connection. This is simple, but it matters.

- DNS leak protection. VeePN says it routes DNS queries through an encrypted tunnel and keeps them from reaching external DNS providers. That helps reduce leakage of browsing activity and destination data.

- NetGuard. VeePN NetGuard blocks malicious websites, trackers, and intrusive ads. That is useful when phishing pages and fake login flows are getting more convincing thanks to AI-generated content.

- No Logs policy. VeePN says it keeps zero browsing, DNS, or search logs and presents this as an independently audited no-log approach. If privacy is part of your security posture, that matters.

- Breach Alert. VeePN Breach Alert monitors for leaked credentials and sends alerts when your data appears in a breach. That gives you a faster chance to reset passwords and lock things down.

- Up to 10 devices. VeePN lets you protect up to 10 devices with one account, so you can cover your laptop, phone, tablet, and other everyday devices without juggling separate plans.

Try VeePN if you want extra protection around phishing, public Wi-Fi, exposed DNS requests, and leaked credentials while the AI threat landscape keeps shifting. We offer it with a 30-day money-back guarantee.

FAQ

Can generative AI be used in cybersecurity?

Yes. Generative AI in cybersecurity can help with threat detection, alert triage, report drafting, incident response, and reducing some false positives. It works best when it supports analysts instead of replacing them.

What is the 30% rule in AI?

The so-called “30% rule” in AI is more an informal idea than a formal, widely accepted cybersecurity standard. People use it to say that AI can take over a fair share of repetitive work, while humans still handle review and final judgment. In security, that balance matters because human oversight is still essential.

What are the biggest generative AI cybersecurity risks?

The biggest generative AI cybersecurity risks include sensitive data leaks, prompt injection, poisoned training data, more convincing phishing attacks, and weak outputs that create false positives or miss real threats.

Will AI replace cybersecurity experts?

Not in the near future. AI can speed up processes, handle routine tasks, and help with threat hunting. However, human cybersecurity experts are still needed for judgment and final decisions.

VeePN is freedom

Download VeePN Client for All Platforms

Enjoy a smooth VPN experience anywhere, anytime. No matter the device you have — phone or laptop, tablet or router — VeePN’s next-gen data protection and ultra-fast speeds will cover all of them.


Want secure browsing while reading this?

See the difference for yourself: try VeePN PRO for 3 days for $1, then continue on the VeePN PRO 1-year plan. No risk, no pressure.